Explainable AI

Related Publications

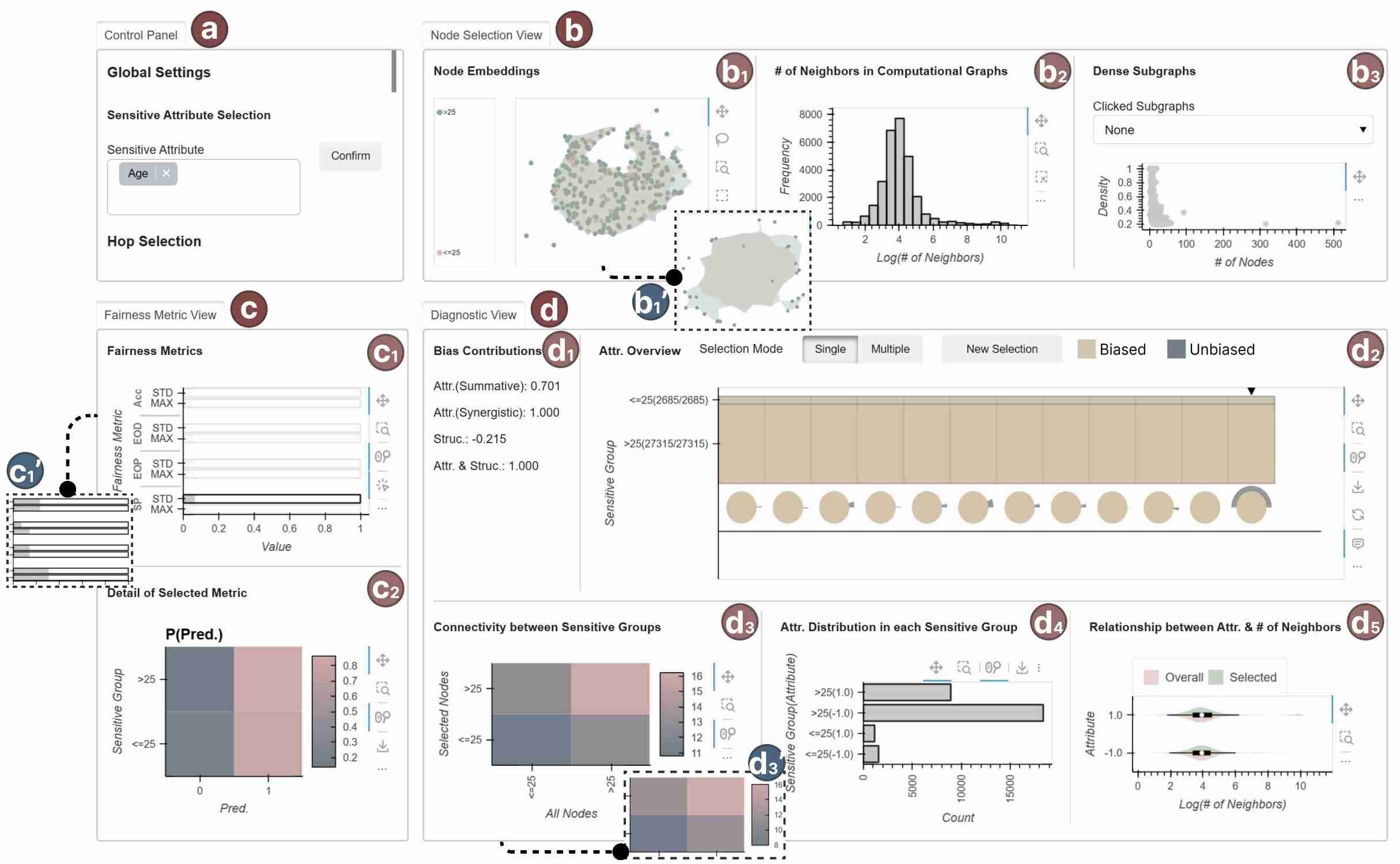

GNNFairViz: Visual Analysis for Graph Neural Network Fairness.

Xinwu Ye, Jielin Feng, Erasmo Purificato, Ludovico Boratto, Michael Kamp, Zengfeng Huang, Siming Chen*.

IEEE Transactions on Visualization and Computer Graphics (TVCG), Accepted, 2025.

InterpretStack: Interpretable Exploration and Interactive Visualization Construction of Stacking Algorithm.

Yu Wang, Jing Lu, Le Liu, Junping Zhang, Siming Chen*.

VLDB Workshops 2024.

GraphInterpreter: a visual analytics approach for dynamic networks evolution exploration via topic models.

Lijing Lin, Jiacheng Yu, Fan Hong, Chufan Lai, Siming Chen, Xiaoru Yuan.

Journal of Visualization, 2024.

Interpreting High-Dimensional Projections With Capacity.

Yang Zhang, Jisheng Liu, Chufan Lai, Yuan Zhou, Siming Chen*.

IEEE Transactions on Visualization and Computer Graphics (TVCG), Accepted, 2023.

Visual Explanation for Open-domain Question Answering with BERT.

Zekai Shao, Shuran Sun, Yuheng Zhao, Siyuan Wang, Zhongyu Wei, Tao Gui, Cagatay Turkay, Siming Chen*.

IEEE Transactions on Visualization and Computer Graphics (TVCG), Accepted, 2023.

Paper: pdf (14.0MB) | Video: mp4 (25.3MB)
